Short on time? Here’s what you need to know:

✅ Morgan Freeman publicly condemns unauthorized AI voice cloning that mimics his iconic tone and says legal action is already underway.

✅ Voice technology misuse raises critical issues around intellectual property, AI ethics, and digital rights enforcement.

✅ The entertainment industry grapples with balancing innovation and protection against unauthorized deepfake audio and AI voice use.

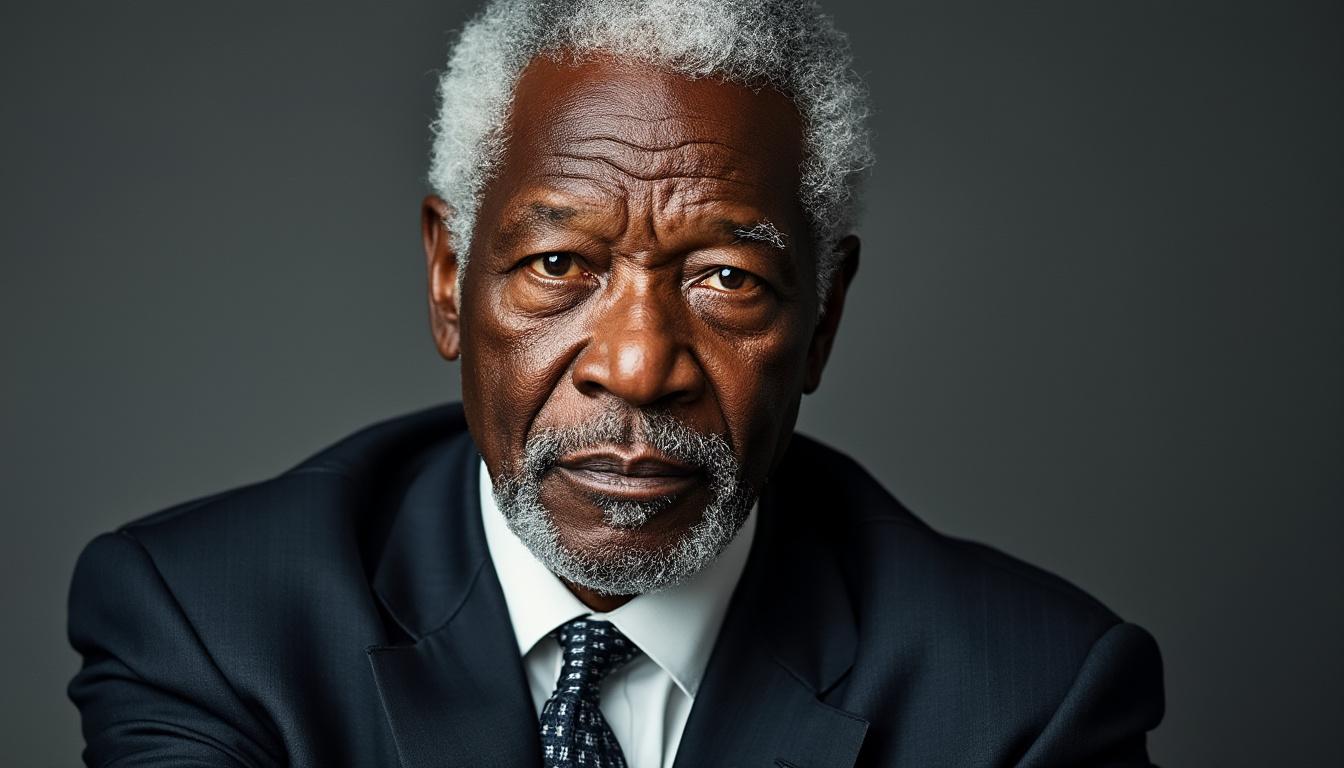

Understanding Morgan Freeman’s Stand Against Unauthorized AI Voice Cloning

Morgan Freeman, celebrated for his gravelly, resonant voice, has recently voiced strong opposition to the unauthorized use of AI for voice cloning. The issue has escalated to the point where Freeman’s legal team is “very, very busy” addressing multiple cases in which AI-generated voices imitate him without his consent. The concern is not new, but it has gained momentum in 2025 as voice cloning and deepfake audio technologies rapidly evolve.

Freeman’s frustration centers on the unauthorized replication of his voice by AI systems that leverage voice technology to mimic his speech patterns and tones without any licensing or payment. As he remarked during an interview with TheWrap, “I’m like any other actor: don’t mimic me with falseness. I don’t appreciate it and I get paid for doing stuff like that, so if you’re gonna do it without me, you’re robbing me.”

This highlights an important facet of digital rights: intellectual property extends beyond visual images to the unique characteristics of one’s voice. Voice cloning technology, while innovative, raises ethical questions when used without the individual’s authorization.

Here are some underlying factors making this debate urgent:

- 💥 Rapid AI Voice Technology Growth – Advanced algorithms now can produce near-perfect voice replicas that are hard to distinguish from authentic recordings.

- ⚖️ Legal and Intellectual Property Gaps – Existing copyright laws struggle to address AI-generated voice content adequately.

- 🎭 Impact on Actors’ Livelihoods – Unauthorized AI use may lead to lost professional opportunities for voice artists.

- 🔍 Lack of Clear Consent Frameworks – Many AI-generated creations sidestep obtaining explicit permissions.

| Issue ⚠️ | Effect on Morgan Freeman & Actors 🎬 | Industry Challenge 🚧 |
|---|---|---|
| Unauthorized AI Voice Cloning | Loss of control over personal voice & earnings | Developing effective laws to protect voice rights |
| Deepfake Audio Misuse | Damage to reputation & inaccurate representations | Balancing innovation with ethical safeguards |
| Absence of Clear Policy | Frequent legal disputes and unaddressed violations | Establishing AI ethics & digital rights standards |

These realities confirm why voices, especially iconic ones such as Freeman’s, must be fiercely protected within AI developments. For industry professionals exploring voice technology, understanding these legal battles offers critical insight into the contours of digital rights and the responsibility required in AI innovation.

The Legal Challenges of Unauthorized AI Voice Use in Entertainment

Legal action has become a pivotal response amid unauthorized AI voice use cases involving renowned voices like Morgan Freeman’s. The complexities arise from how intellectual property law intersects with emerging AI-generated content, especially for voice cloning and deepfake audio.

Morgan Freeman’s legal team is reportedly handling “quite a few” incidents of voice imitation this year alone, reflecting a broader landscape in which digital rights enforcement has not kept pace with technology. According to NY Daily News, these actions emphasize the necessity of protecting performers from unauthorized commodification of their voices.

Legal professionals face several challenges when approaching these cases:

- ⚖️ Defining Ownership: Determining who owns a voice when it’s digitally recreated through AI involves debates about personality rights versus intellectual property.

- 🕵️ Proving Unauthorized Use: Establishing that voice replication occurred without consent requires technical forensic evidence, which is still evolving.

- 📜 Navigating Jurisdictional Issues: AI and voice content spread globally; different laws complicate consistent enforcement.

- 💰 Seeking Compensation: Quantifying losses from unauthorized use poses an added legal hurdle.

| Legal Aspect ⚖️ | Description | Challenge in Implementation |
|---|---|---|
| Personality & Voice Rights | Recognition of voice as a personal asset | Limited precedent for AI-generated voice protection |
| Copyright & IP Laws | Application of copyright to voice performances | Adapting old laws to new AI-generated media |
| Enforcement Mechanisms | Monitoring and responding to violations | Technological and legal gaps slow down action |

Following Freeman’s vocal response, industry groups like SAG-AFTRA have highlighted parallel concerns. They have spoken out against AI-generated replicas of performers created without authorization or compensation, emphasizing that such creations “jeopardize performer livelihoods and devalue human artistry.” This stance signals the beginning of stronger advocacy and possible institutional safeguards for voice and identity protection in AI technologies.

Ethical Dimensions of AI Voice Use and Deepfake Audio in Public Figures

The rise of AI voice cloning and deepfake audio brings to the fore significant ethical questions, particularly when it involves public figures like Morgan Freeman. Beyond legal disputes, the ethical implications pertain to authenticity, consent, and the potential for misuse.

Voice technology innovations can offer tremendous utility in media, tourism, gaming, and communications, but unethical applications risk damaging trust and personal rights. Freeman’s reaction mirrors broader concerns about AI ethics and its respect for human dignity.

- 🔑 Consent & Agency – Replicating a voice without explicit permission undermines personal agency.

- 🛑 Potential for Misuse – Deepfake audio can spread misinformation or misrepresent someone’s views.

- ⚖️ Transparency in AI-Generated Content – Clear labeling is essential to distinguish AI from real human voices.

- 🎨 Respecting Human Creativity – Ensuring AI complements but does not replace genuine performances.

These ethical precepts are central to ongoing debates on how AI voice use should be regulated. While technology enables new forms of engagement, the industry must uphold principles safeguarding respect for individuals’ identities.

| Ethical Principle 🌍 | Relevance to AI Voice Use | Application Challenges |
|---|---|---|
| Obtaining Clear Consent | Ensures a voice is used with approval | Difficult to monitor unauthorized AI recreations |
| Preventing Deceptive Use | Protects against misleading impressions via deepfakes | Detection systems are still imperfect |
| Promoting Transparency | Builds trust with audiences through disclosures | Not universally applied or legislated |

For professionals developing or adopting voice AI technology, incorporating these ethical frameworks ensures sustainable innovation that respects human rights. Initiatives like identity protection against AI voice theft underline the need for vigilance and integrity in digital voice applications.
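As an illustration of the transparency principle above, the following is a minimal Python sketch of a disclosure label attached to an AI-generated audio clip. It is not an industry standard: the field names (`ai_generated`, `voice_source`, `consent_reference`) and both helper functions are hypothetical, chosen only to show how a clip could carry a machine-readable statement that it is synthetic and tied to a specific consent record.

```python
import hashlib
import json


def build_disclosure(audio_bytes: bytes, voice_source: str, consent_reference: str) -> str:
    """Return a JSON disclosure label for an AI-generated clip.

    All field names are hypothetical and used for illustration only.
    """
    label = {
        "ai_generated": True,
        "voice_source": voice_source,            # whose voice the model imitates
        "consent_reference": consent_reference,  # pointer to the signed authorization
        "sha256": hashlib.sha256(audio_bytes).hexdigest(),  # binds the label to this clip
    }
    return json.dumps(label, indent=2, sort_keys=True)


def label_matches(audio_bytes: bytes, disclosure_json: str) -> bool:
    """Check that a disclosure label was issued for exactly these audio bytes."""
    label = json.loads(disclosure_json)
    return label["sha256"] == hashlib.sha256(audio_bytes).hexdigest()


clip = b"placeholder bytes standing in for a synthesized audio file"
disclosure = build_disclosure(clip, "Example Narrator", "contract-0001")
print(disclosure)
```

A label like this only documents what a cooperative publisher declares. It does not detect undisclosed clones, which is why the table above lists detection as a separate, still imperfect, challenge.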

The Impact of Morgan Freeman’s Case on Future Voice Technology Regulation

As Morgan Freeman publicly confronts unauthorized use of his distinctive voice, his case serves as a pivotal example influencing voice technology regulation. The high-profile nature of this dispute elevates awareness of digital rights and legal protections needed in the AI era.

Governments, industry coalitions, and technology developers observe these legal battles closely, shaping future policies for AI ethics and intellectual property. Freeman’s active legal response exemplifies how public figures can harness digital rights frameworks to combat unauthorized voice cloning.

- 📌 Increased Regulatory Pressure – Calls for clearer laws defining voice and personality data ownership.

- 🛠️ Development of Monitoring Tools – AI is also used to detect illegal voice cloning.

- 🔎 Stronger Industry Standards – Content creators and AI vendors adopting stricter consent protocols.

- 💡 Awareness Among Creators and Consumers – Educating stakeholders on the risks of deepfake audio and unauthorized AI use.

| Trend 📈 | Effect on Voice Technology | Stakeholder Response |
|---|---|---|
| Legal Actions | Establish case law for voice rights | Actors and lawyers pursuing enforcement |
| Policy Development | Definition of voice as intellectual property | Regulators drafting new laws |
| Technological Innovation | Detection and watermarking solutions | AI developers integrating safeguards |

For professionals in cultural mediation, tourism, and event management using voice technology, Freeman’s case underscores the importance of ethical and legal vigilance. Adopting compliant solutions such as those presented by Grupem helps ensure respect for artists’ digital rights while offering audiences authentic experiences. Explore more about AI voice communication frameworks as part of an informed digital strategy.

Practical Measures for Protecting Voice Rights in the Age of AI

With the ongoing rise of AI-powered voice technologies, strategies to safeguard voice rights have become essential. Morgan Freeman’s legal battles illustrate the urgency for proactive approaches combining technical, legal, and ethical measures.

Professionals deploying voice AI, especially in sectors like tourism and cultural events, should consider the following key practices:

- 🔍 Obtain Explicit Consent – Always secure clear authorizations before using voice data, minimizing unauthorized cloning risks.

- 🛡️ Implement Voice Watermarking – Utilize emerging technologies that embed subtle markers in AI-generated voices, enabling source identification.

- 🧑‍⚖️ Stay Informed on Legal Developments – Follow evolving voice technology regulations to remain compliant and manage risks.

- 💡 Educate Audiences – Transparently communicate when AI voices are used, building trust with end users.

| Protection Strategy 🛡️ | Benefits | Implementation Tips |
|---|---|---|
| Consent Management | Prevents unauthorized voice use | Use contracts, digital consent forms |
| Voice Watermarking | Enables tracing voice cloning | Adopt vendors with watermark tech |
| Legal Monitoring | Ensures compliance with laws | Engage legal experts specialized in AI |
| Audience Transparency | Enhances trust in AI applications | Clear communication in user interfaces |

These measures align with best practices for safeguarding voice technology recommended by experts in smart audio innovations. In turn, they protect the rights of performers while enabling the ethical deployment of voice AI in diverse cultural and tourism settings.
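The consent-management practice listed above can be made concrete with a small check that runs before any voice is synthesized. The Python sketch below is one possible shape, not a legal or vendor-specific implementation: the `VoiceConsent` record, its fields, and the `is_use_authorized` helper are all hypothetical names, and the performer and licensee are placeholder values.

```python
from dataclasses import dataclass
from datetime import date
from typing import FrozenSet, Iterable


@dataclass(frozen=True)
class VoiceConsent:
    """Hypothetical record of a performer's authorization for voice use."""
    performer: str
    licensee: str
    permitted_uses: FrozenSet[str]  # e.g. {"audio_guide"}; anything else is denied
    expires: date


def is_use_authorized(
    consents: Iterable[VoiceConsent],
    performer: str,
    licensee: str,
    use: str,
    on_date: date,
) -> bool:
    """Return True only if an unexpired consent covers this exact use."""
    return any(
        c.performer == performer
        and c.licensee == licensee
        and use in c.permitted_uses
        and on_date <= c.expires
        for c in consents
    )


# Placeholder data: one consent limited to audio guides until the end of 2026.
consents = [
    VoiceConsent(
        performer="Example Narrator",
        licensee="Example Museum",
        permitted_uses=frozenset({"audio_guide"}),
        expires=date(2026, 12, 31),
    )
]

today = date(2026, 1, 15)
guide_ok = is_use_authorized(consents, "Example Narrator", "Example Museum", "audio_guide", today)
ad_ok = is_use_authorized(consents, "Example Narrator", "Example Museum", "advertising", today)
print(guide_ok, ad_ok)  # True False
```

The check denies by default: a use that is not explicitly listed, or a date past expiry, is treated as unauthorized, which mirrors the explicit-consent principle rather than assuming permission.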

What is unauthorized AI voice cloning?

Unauthorized AI voice cloning refers to the process of digitally replicating an individual’s voice without their permission, often using deep learning models to create convincing imitations.

Why is Morgan Freeman specifically concerned about AI voice use?

Morgan Freeman’s unique and iconic voice is frequently targeted by AI systems for replication without consent, which affects his intellectual property rights and earnings, prompting legal action.

How are legal teams combating unauthorized AI voice use?

Legal teams pursue cases against companies and individuals who replicate voices without consent, focusing on intellectual property claims, personality rights, and seeking compensation.

What ethical challenges does AI voice technology present?

AI voice technology raises issues around consent, potential misuse for misinformation through deepfake audio, and the need for transparency and respect for human creativity.

How can organizations protect voice rights when using AI?

Organizations should obtain explicit consent, employ voice watermarking technologies, stay updated on legal regulations, and communicate transparently with their audience to maintain trust.